Introduction

Catch up on last week's post on the Impact of Kubernetes on Network Administrators here.

Container-based microservice applications provide benefits to the development and operation of applications, and Kubernetes is the most popular platform for container management and orchestration. The move to this application architecture results in a number of changes, as discussed here. One of the main considerations for a network administrator is how to manage and maintain the availability of the application to users, and specifically the traffic path into the Kubernetes cluster to reach the relevant microservices. There are a number of options for how this can be done.

Deployments and Services

First, let's mention the two most important objects in Kubernetes: Deployments and Services.

A Deployment is how the desired state of an application is defined, including the image to use and the number of instance replicas that should run. Once the instances are created, the Kubernetes Deployment controller continuously monitors them to ensure this state is maintained.
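To make the idea of desired state concrete, here is a minimal sketch that builds a Deployment manifest as a Python dictionary and prints it as JSON, which kubectl accepts alongside YAML. The application name, image and port are hypothetical placeholders, not taken from any real deployment.

```python
import json


def make_deployment(name: str, image: str, replicas: int, container_port: int) -> dict:
    """Build a minimal apps/v1 Deployment manifest as a plain dict."""
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            # Desired number of running instances (pods).
            "replicas": replicas,
            # The selector must match the labels on the pod template below.
            "selector": {"matchLabels": {"app": name}},
            "template": {
                "metadata": {"labels": {"app": name}},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            "ports": [{"containerPort": container_port}],
                        }
                    ]
                },
            },
        },
    }


if __name__ == "__main__":
    # Hypothetical frontend microservice with three replicas.
    manifest = make_deployment("frontend", "example/frontend:1.0", 3, 8080)
    print(json.dumps(manifest, indent=2))
```

Saved to a file, output like this could be applied with `kubectl apply -f`, after which the controller works to keep three replicas running.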

A Service, on the other hand, is how policies for accessing an application are defined. Since microservices typically mean an application is broken down into many sub-components, different parts of the application may be exposed using different Service types, depending on whether external access is needed (for example, a frontend web page component) or whether the microservice is only accessible to other microservices (for example, an internal database).

Let’s examine the different Service Types and how to effect traffic routing into the Kubernetes Cluster – an important consideration for any Network Administrator.

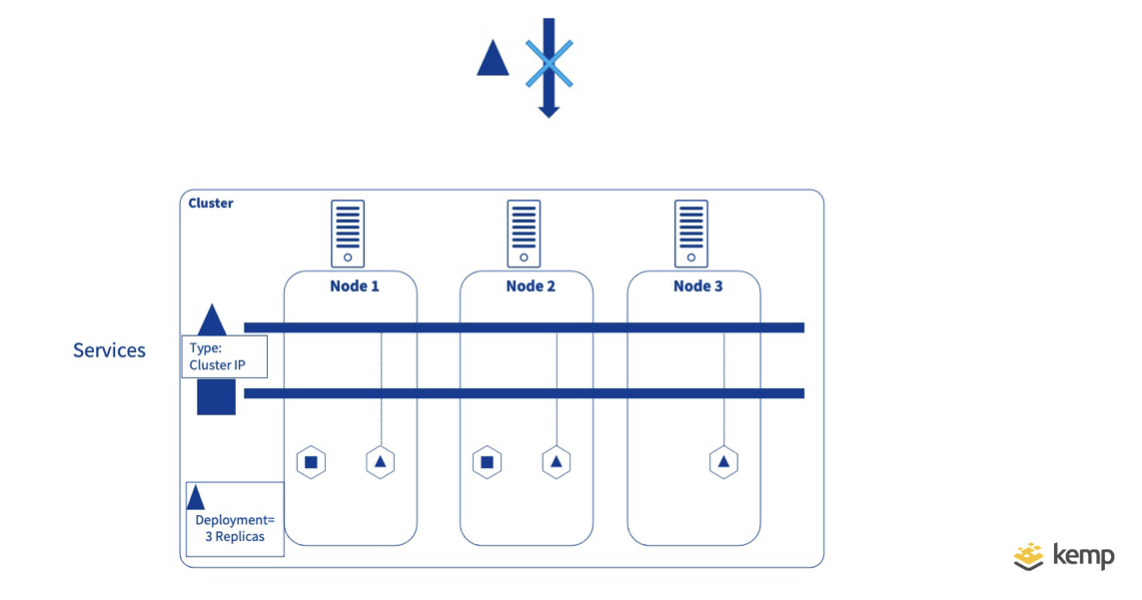

ClusterIP

ClusterIP is the default Service type. It exposes the Service on an internal IP address within the Kubernetes cluster, so a Service of this type is typically only reachable from within the cluster.

Obviously this is not useful if you want to expose a service for outside consumption. However, in an application broken up into many microservices, it is likely that a large number of services will only be consumed by other microservices, so external exposure is not required.
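As an illustration, a ClusterIP Service for an internal database microservice might look like the sketch below. The Service name, label and port are assumptions chosen for the example.

```python
import json

# Hypothetical internal database microservice; the name, label and port
# are illustrative only.
service = {
    "apiVersion": "v1",
    "kind": "Service",
    "metadata": {"name": "orders-db"},
    "spec": {
        # ClusterIP is the default, so this field could be omitted.
        "type": "ClusterIP",
        # Traffic is sent to pods carrying this label.
        "selector": {"app": "orders-db"},
        # Port exposed on the internal cluster IP, and the pod port behind it.
        "ports": [{"port": 5432, "targetPort": 5432}],
    },
}

print(json.dumps(service, indent=2))
```

Nothing in this definition opens a port on the nodes, which is why the Service stays internal to the cluster.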

For frontend services that need to be reachable from outside, there are a number of alternative options.

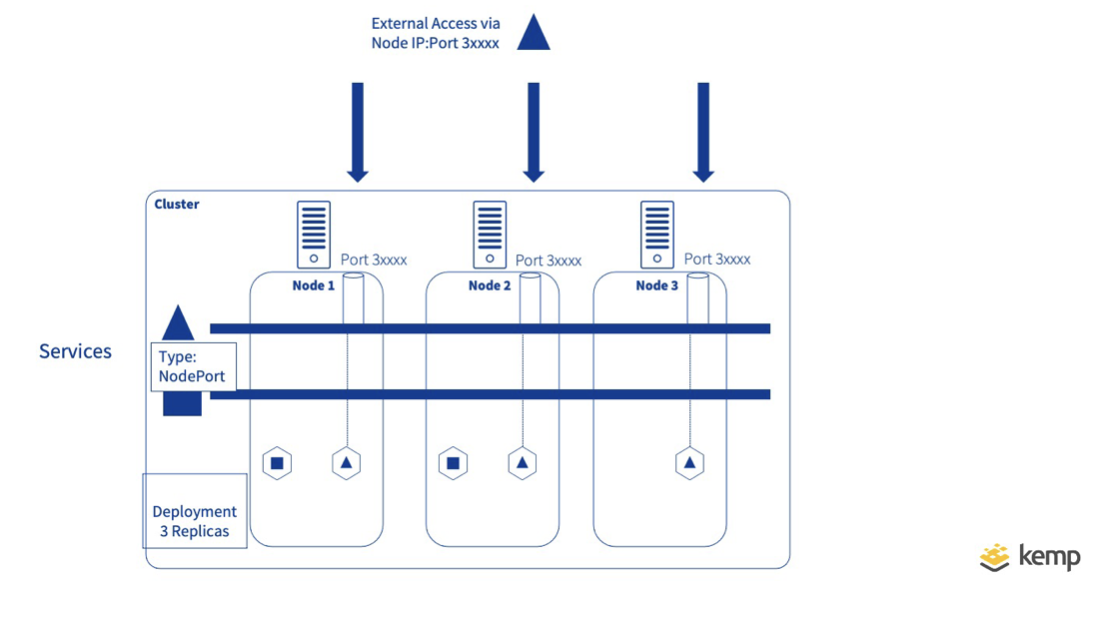

NodePort

NodePort extends type ClusterIP by mapping the internal IP address and port number to an external port on each Kubernetes node. Since nodes are servers (virtual or hardware) that are routable outside of Kubernetes, users can simply access one of the nodes on the specified port to reach the service. Every node in the Kubernetes cluster will listen on the unique port assigned to each NodePort Service and will translate requests received to the internal cluster IP using Network Address Translation (NAT).
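The sketch below shows what a NodePort Service definition could look like and which node addresses a user could then connect to. The node IP addresses, Service name and port numbers are hypothetical; the 30000-32767 range is the Kubernetes default for node ports.

```python
import json

# Default range from which Kubernetes allocates node ports.
NODE_PORT_RANGE = range(30000, 32768)


def make_nodeport_service(name: str, port: int, target_port: int, node_port: int) -> dict:
    """Build a NodePort Service manifest, rejecting ports outside the default range."""
    if node_port not in NODE_PORT_RANGE:
        raise ValueError(f"nodePort {node_port} is outside 30000-32767")
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "type": "NodePort",
            "selector": {"app": name},
            "ports": [{"port": port, "targetPort": target_port, "nodePort": node_port}],
        },
    }


def external_endpoints(node_ips: list, node_port: int) -> list:
    """Every node listens on the same node port, so each node IP is an entry point."""
    return [f"{ip}:{node_port}" for ip in node_ips]


if __name__ == "__main__":
    svc = make_nodeport_service("frontend", 80, 8080, 30080)
    print(json.dumps(svc, indent=2))
    # Hypothetical node addresses.
    print(external_endpoints(["10.0.0.11", "10.0.0.12", "10.0.0.13"], 30080))
```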

NodePort presents some challenges. First, when traffic arrives on a NodePort, there is no guarantee that a pod instance for the microservice exists on that node, meaning traffic may have to be forwarded via a second node. (This node-to-node forwarding can be prevented by setting externalTrafficPolicy to Local, but a request that arrives at a node with no local pod would then receive no response.)

Another challenge arises when, as is common, a large number of web services need to be exposed. Since each service needs a unique port number, keeping track of which web service is available on which port is difficult, and well-known ports such as 80/443 cannot be used multiple times.

NodePort options can be used to increase the efficiency of traffic routing. Setting externalTrafficPolicy to Local, for example, forces a node to accept traffic only if a running instance of the service is on that node, minimising east-west traffic.
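The effect of externalTrafficPolicy on the traffic path can be modelled with a small simulation. This is a simplified sketch, not how kube-proxy is implemented: it only lists which nodes could end up serving a request that arrives at a given node. The node names and pod counts are made up.

```python
def possible_backends(arrival_node: str, pods_by_node: dict, policy: str = "Cluster") -> list:
    """Return the nodes whose pods may serve a request arriving at arrival_node.

    policy "Cluster": any node running a pod may be chosen, so a second
                      node-to-node hop is possible.
    policy "Local":   only pods on the arrival node are used; if there are
                      none, the request is not answered.
    """
    if policy == "Local":
        return [arrival_node] if pods_by_node.get(arrival_node, 0) > 0 else []
    if policy == "Cluster":
        return sorted(node for node, count in pods_by_node.items() if count > 0)
    raise ValueError(f"unknown policy: {policy}")


if __name__ == "__main__":
    # Hypothetical cluster: node-b runs no pod for this Service.
    pods = {"node-a": 2, "node-b": 0, "node-c": 1}
    print(possible_backends("node-b", pods, "Cluster"))
    print(possible_backends("node-b", pods, "Local"))
    print(possible_backends("node-a", pods, "Local"))
```

In this model a request to node-b is forwarded to another node under the Cluster policy, while under Local it has nowhere to go, which is why anything in front of the nodes should only send traffic to nodes that run a pod for the Service.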

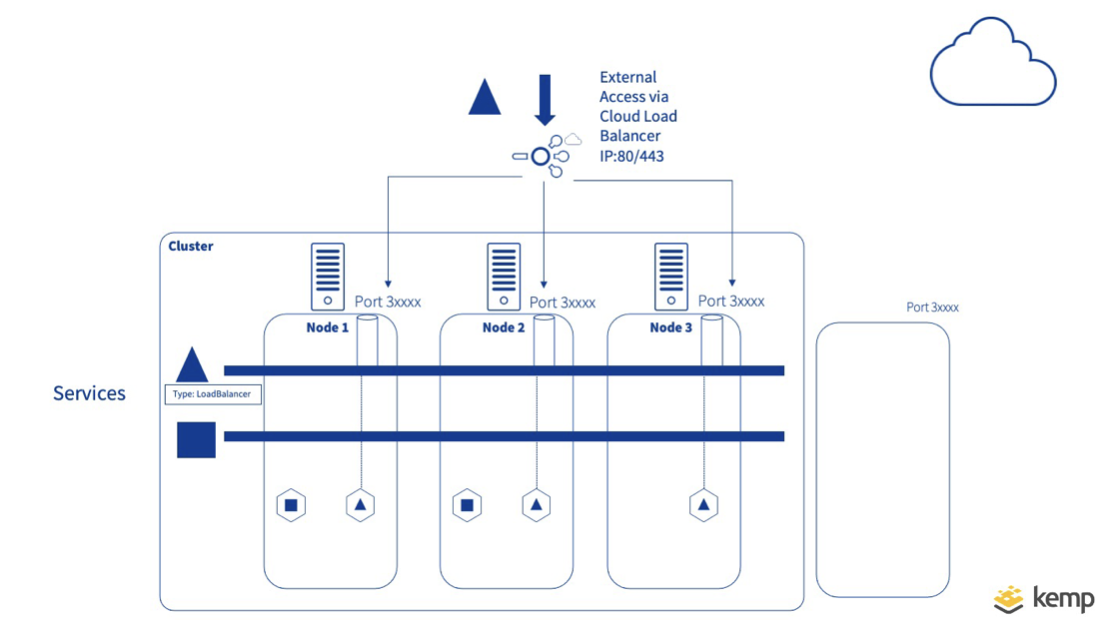

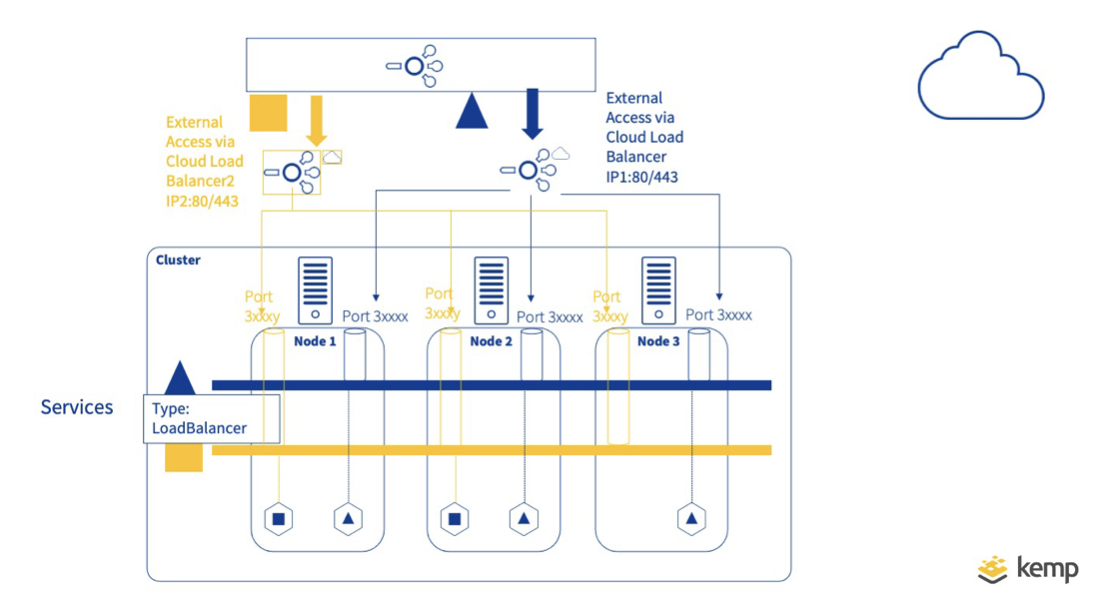

LoadBalancer

The issue of publishing Services on known ports can be solved in many cloud provider Kubernetes solutions with our next Service type: LoadBalancer. When this type is specified in the Service definition, and where the cloud provider supports it, an external load balancer is created in the cloud and assigned a fixed external IP address to enable external access. This load balancer can be published on a well-known port (80/443) and distributes traffic across NodePorts, hiding the internal ports from the user.
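A Service of type LoadBalancer differs from the earlier types mainly in its `type` field. The following sketch assumes a hypothetical frontend published on port 443; whether an external load balancer is actually created depends on the platform the cluster runs on.

```python
import json

# Hypothetical frontend published on a well-known port; the name and
# target port are illustrative.
service = {
    "apiVersion": "v1",
    "kind": "Service",
    "metadata": {"name": "frontend"},
    "spec": {
        # Asks the cloud provider, where supported, to provision an
        # external load balancer for this Service.
        "type": "LoadBalancer",
        "selector": {"app": "frontend"},
        # Users connect to port 443 on the load balancer; pods listen on 8443.
        "ports": [{"port": 443, "targetPort": 8443}],
    },
}

print(json.dumps(service, indent=2))
```

No node port is listed here because one is allocated automatically behind the load balancer, so users only ever see port 443.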

While this is a useful option, there are a number of challenges.

First of all, type LoadBalancer assumes a cloud Kubernetes platform that supports it. Secondly, since this is done at a per-service level, multiple cloud load balancers may be created, all of which may incur costs. Where an organisation has only one specific public IP address, a proxy is often needed in front of the service-specific load balancer(s) to direct traffic to the right one based on the URL or hostname used. Where N Services are being made accessible, N+1 load balancers will be required, which may not be scalable.

Ingress Controller

To solve the scalability challenges of type LoadBalancer, there is the Ingress Controller. An Ingress Controller enables a single endpoint to be mapped to multiple applications. This is done by configuring an Ingress resource in Kubernetes that uses hostnames and paths to direct traffic to specific services. The Ingress Controller can be implemented within Kubernetes as a container or external to Kubernetes.
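To show how one endpoint can serve several applications, the sketch below defines an Ingress resource with host and path rules and a small function that picks the backend Service for a request. The hostnames, Service names and ports are hypothetical, and the matching logic is a simplified model of prefix-based routing rather than the behaviour of any particular Ingress Controller.

```python
def path_rule(path: str, service: str, port: int) -> dict:
    """One path entry of an Ingress rule, pointing at a backend Service."""
    return {
        "path": path,
        "pathType": "Prefix",
        "backend": {"service": {"name": service, "port": {"number": port}}},
    }


# Hypothetical Ingress: two hostnames, three backend Services, one entry point.
ingress = {
    "apiVersion": "networking.k8s.io/v1",
    "kind": "Ingress",
    "metadata": {"name": "shop"},
    "spec": {
        "rules": [
            {
                "host": "shop.example.com",
                "http": {"paths": [path_rule("/", "frontend", 80),
                                   path_rule("/api", "orders-api", 8080)]},
            },
            {
                "host": "blog.example.com",
                "http": {"paths": [path_rule("/", "blog", 80)]},
            },
        ]
    },
}


def prefix_matches(rule_path: str, request_path: str) -> bool:
    """Match on whole path segments, so /api matches /api/x but not /apiary."""
    if rule_path == "/":
        return True
    rule_path = rule_path.rstrip("/")
    return request_path == rule_path or request_path.startswith(rule_path + "/")


def route(ingress: dict, host: str, path: str):
    """Return the backend Service name for a request, or None if no rule matches."""
    best = None
    for rule in ingress["spec"]["rules"]:
        if rule.get("host") != host:
            continue
        for entry in rule["http"]["paths"]:
            # Prefer the longest matching path.
            if prefix_matches(entry["path"], path):
                if best is None or len(entry["path"]) > len(best["path"]):
                    best = entry
    return best["backend"]["service"]["name"] if best else None


if __name__ == "__main__":
    print(route(ingress, "shop.example.com", "/api/orders"))
    print(route(ingress, "shop.example.com", "/checkout"))
    print(route(ingress, "blog.example.com", "/2021/post"))
```

All three Services here share the same external address and port; only the hostname and path of the request decide where it goes.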

When the Ingress Controller is run as a container, it gains all the benefits of containerisation. The Ingress Controller itself will typically be exposed as type NodePort, but since it includes the traffic routing rules defined by the Ingress resource, multiple services can be mapped to a single port (e.g. 80/443). Ingress Controllers may themselves be placed behind an external load balancer or reverse proxy (not to be confused with the Service type LoadBalancer described above), which distributes traffic across the Kubernetes nodes.

The Ingress Controller may also be fulfilled outside of the Kubernetes cluster, as is the approach used by Kemp Ingress Controller. With this approach the rules are still defined in the Ingress resource in Kubernetes, but an external device fulfils the function of directing traffic to the correct pods on the correct node. This approach may require some route configuration in order to send traffic to the correct nodes to reach specific pods, but benefits from an efficient path for inbound traffic.

Summary

In the next section we will examine the Ingress Controller functionality in more detail and the different options for delivering this.

To take full advantage of Kemp Ingress Controller functionality see here…

The Ingress Controller Series

- WHAT IS KUBERNETES?

- IMPACT OF KUBERNETES ON NETWORK ADMINISTRATORS

- EXPOSING KUBERNETES SERVICES

- THE KEMP INGRESS CONTROLLER EXPLAINED

- A HYBRID APPROACH TO MICROSERVICES WITH KEMP

- MANAGING THE RIGHT LEVEL OF ACCESS BETWEEN NETOPS AND DEV FOR MICROSERVICE PUBLISHING

Upcoming Webinar

For more information, check out our upcoming webinar: Integrating Kemp load balancing with Kubernetes.